The OpenClaw Era: Everything Demand Forecasting Practitioners Need to Know

OpenClaw is an open-source agent framework that gives AI language models like GPT and Claude the ability to see and act. With it, AI doesn't just answer questions — it can autonomously plan and execute entire workflows, from logging into ERP systems and collecting data to updating forecasts and placing inventory orders. The project has racked up over 145,000 stars on GitHub, instantly becoming one of the hottest topics in the applied AI space. At the same time, concerns around data leakage and security vulnerabilities are mounting among enterprises both in Korea and globally.

Amid these conflicting signals, the same question is on every demand forecasting practitioner's and decision-maker's mind: our global competitors are already generating results with AI agents — how should we evaluate this for our own organization?

This article aims to provide a practical answer to that question. It covers how OpenClaw is transforming demand forecasting workflows, how global leaders have structured their agent-based systems to deliver results, what the security threats actually look like, and where organizations without formal governance frameworks can realistically start. Now more than ever, the critical question isn't which technology to adopt, but how to design its deployment. We hope this article serves as a reference point for making that call.

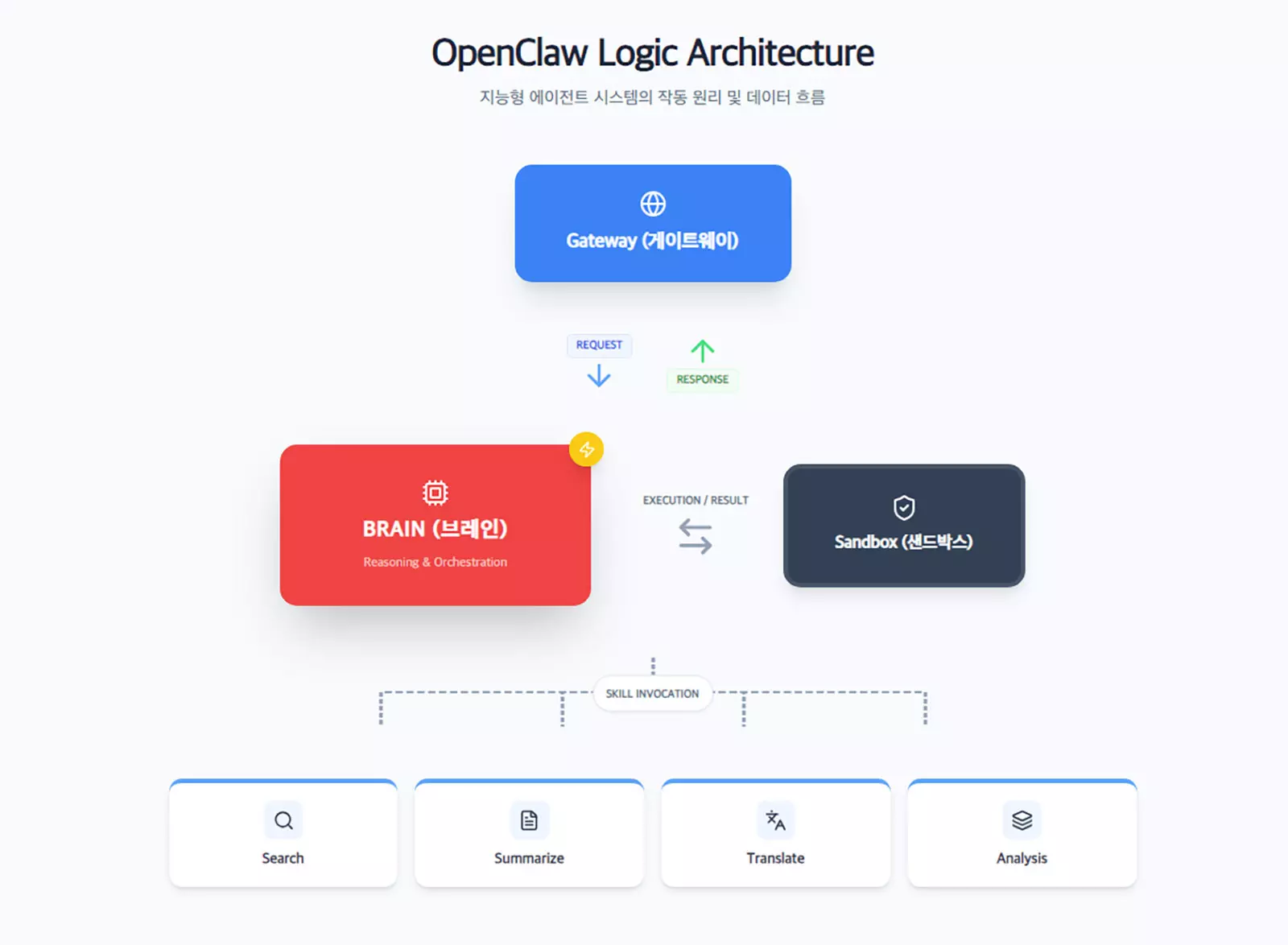

OpenClaw as an Agent Framework

The Difference Between Language Models and Agent Frameworks

To understand OpenClaw, we first need to clear up a common misconception. OpenClaw is not a language model like GPT or Claude. It's an open-source agent framework that gives existing AI language models the ability to see and act. If a language model is a tool that answers questions, an agent framework is a platform that enables that model to formulate plans, connect to external systems, and execute tasks on its own.

What This Means for Demand Forecasting

The implications for demand forecasting practitioners are clear. An agent can log into the ERP system every morning before dawn, automatically pull the previous day's sales data, and collect weekly weather forecasts from meteorological services. It then feeds all of this into the forecasting model to refresh SKU-level demand projections — the entire process executed without a single human click.

There's another dimension worth noting: the agent can remember which items had the largest forecast errors and, the next day, proactively broaden its search for external variables related to those items — continuously improving on its own.
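The daily loop described above fits in a few lines of code. This is a minimal sketch and does not use OpenClaw's API. The ERP pull is a hypothetical callable passed in by the caller, weather inputs are left out, and the forecasting model is reduced to a moving average so the example runs on its own. The part that matters is the control flow: score yesterday's errors, remember the worst SKUs for the next run, and refresh every forecast.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentMemory:
    """State the agent carries between daily runs (hypothetical structure)."""
    focus_skus: List[str] = field(default_factory=list)


def moving_average(history: List[float], window: int = 7) -> float:
    """Stand-in forecast model: mean of the last `window` observations."""
    recent = history[-window:]
    return sum(recent) / len(recent)


def daily_run(
    fetch_sales: Callable[[], Dict[str, List[float]]],  # hypothetical ERP pull
    last_forecasts: Dict[str, float],                  # forecasts made yesterday
    memory: AgentMemory,
    top_k: int = 2,
) -> Dict[str, float]:
    """One scheduled run: score yesterday's errors, remember the worst SKUs,
    then refresh every SKU's forecast."""
    sales = fetch_sales()
    errors = {}
    for sku, history in sales.items():
        actual = history[-1]  # yesterday's actual sales
        if sku in last_forecasts and actual > 0:
            # absolute percentage error of yesterday's forecast
            errors[sku] = abs(last_forecasts[sku] - actual) / actual
    # Carry the worst SKUs forward so the next run can search for extra drivers.
    memory.focus_skus = sorted(errors, key=errors.get, reverse=True)[:top_k]
    return {sku: moving_average(history) for sku, history in sales.items()}


memory = AgentMemory()
sales = {"A": [10, 10, 10], "B": [5, 5, 20], "C": [8, 8, 8]}
new_forecasts = daily_run(lambda: sales, {"A": 10, "B": 5, "C": 6}, memory)
print(memory.focus_skus, new_forecasts)
```

In a real deployment, `focus_skus` is the hand-off point. The next run would use it to decide which SKUs get extra external data.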

This is fundamentally different from conventional automation. Traditional automation repeats predefined rules on a loop. An agent assesses the situation and decides what to do next on its own. In effect, it's a technology that can progressively take over the judgment calls and decisions that practitioners previously had to make manually across the forecasting process.

How Global Leaders Are Deploying AI Agents

Walmart's Self-Healing Inventory System

Walmart doesn't rely on a single AI forecasting model in isolation. Multiple specialized digital agents assess deliveries, order volumes, and timing in real time, continuously adjusting forecasts in response to weather, traffic, and demand shifts.

For perishables, forecasts are revised multiple times a day to minimize unnecessary waste. One-off anomalies that appear unexpectedly are automatically "forgotten" so they don't distort future predictions. When inventory depletes faster than expected, AI autonomously adjusts replenishment schedules and redesigns logistics flows — a "self-healing" system. Indira Uppuluri, Walmart's VP of Supply Chain Technology, describes it as "diverse intelligence driving every stage of the supply chain."
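Walmart has not published how its system "forgets" one-off anomalies. The sketch below therefore shows only a common textbook pattern with similar behavior: clipped (Huber-style) simple exponential smoothing. After a short warm-up, any error much larger than the usual error size is capped before it updates the baseline. The values of `alpha`, `k`, and `warmup` are illustrative assumptions.

```python
from typing import List


def robust_smooth(series: List[float], alpha: float = 0.3, k: float = 3.0,
                  warmup: int = 5) -> List[float]:
    """Clipped (Huber-style) simple exponential smoothing.

    Returns the one-step-ahead forecast made before each observation.
    After `warmup` points, any error larger than k times the running mean
    absolute error is capped, so a one-off spike barely moves the level.
    """
    level = series[0]
    scale = 0.0  # running mean absolute error, i.e. the "normal" error size
    forecasts = []
    for i, y in enumerate(series):
        forecasts.append(level)
        error = y - level
        if i >= warmup and abs(error) > k * scale:
            error = k * scale if error > 0 else -k * scale
        level += alpha * error
        # Update the scale with the (possibly capped) error so spikes don't inflate it.
        scale = (1 - alpha) * scale + alpha * abs(error)
    return forecasts


history = [10, 11, 9, 10, 11, 9, 10, 100, 10, 10]  # index 7 is a one-off spike
robust = robust_smooth(history)
plain = robust_smooth(history, k=float("inf"))  # no clipping: ordinary smoothing
print(round(robust[8], 2), round(plain[8], 2))
```

With clipping, the forecast made right after the spike stays close to the usual level of about 10. Plain smoothing carries a large part of the spike into the next forecast.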

Hyper-Granular Forecasting Strategies at Amazon, Unilever, and P&G

Amazon launched a new large-scale AI forecasting model in June 2025, predicting demand for over 400 million products daily across the U.S., Canada, Mexico, and Brazil. By incorporating time-varying signals — weather, holidays, regional preferences — alongside historical sales, the company improved long-range, country-level forecast accuracy for deal events by 10% and regional accuracy for top-selling items by 20%.

The collaboration between Unilever and Walmart Mexico is equally noteworthy. By automatically ingesting up to five years of SKU-, store-, and day-level data, the system generates 3.1 million forecast combinations daily and uses them to auto-replenish 20 million cases across Mexico. The result: a 98% fill rate and 12% revenue growth within a single year.

P&G has also adopted granular forecasting down to the individual product and store level, lifting annual forecast accuracy by 24 percentage points, cutting stockout rates by 15%, and projecting $2.3 billion in annual cost savings from AI optimization alone.

The common thread across these cases is clear: none of them depend on a single forecasting model. Specialized agents for data collection, forecast execution, inventory adjustment, and anomaly detection work in concert, while humans focus on exceptions and high-stakes decisions. Agent-based demand forecasting has moved well beyond the concept stage — it's actively running in production.
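Code can make this division of labor concrete: a chain of narrow stages, each passing its results to the next, with anything unusual sent to a human queue. The sketch is a generic illustration and does not reproduce any of these companies' architectures. The last-value forecast and the 50% deviation rule are placeholder assumptions.

```python
from typing import Dict, List, Set, Tuple

History = Dict[str, List[float]]


def collect(raw: History) -> History:
    """Data-collection agent: keep only SKUs that have sales history."""
    return {sku: h for sku, h in raw.items() if h}


def forecast(data: History) -> Dict[str, float]:
    """Forecasting agent: naive last-value forecast as a placeholder model."""
    return {sku: h[-1] for sku, h in data.items()}


def detect_anomalies(data: History, fc: Dict[str, float],
                     tolerance: float = 0.5) -> List[str]:
    """Anomaly agent: flag forecasts more than `tolerance` away from the history mean."""
    flagged = []
    for sku, h in data.items():
        mean = sum(h) / len(h)
        if mean > 0 and abs(fc[sku] - mean) / mean > tolerance:
            flagged.append(sku)
    return flagged


def replenish(fc: Dict[str, float], on_hand: Dict[str, float],
              hold: Set[str]) -> Dict[str, float]:
    """Inventory agent: order up to the forecast, skipping SKUs held for review."""
    return {sku: max(0.0, fc[sku] - on_hand.get(sku, 0.0))
            for sku in fc if sku not in hold}


def run_pipeline(raw: History,
                 on_hand: Dict[str, float]) -> Tuple[Dict[str, float], List[str]]:
    data = collect(raw)
    fc = forecast(data)
    exceptions = detect_anomalies(data, fc)
    orders = replenish(fc, on_hand, set(exceptions))
    return orders, exceptions  # exceptions go to a human review queue


orders, for_review = run_pipeline(
    {"A": [10, 10, 10], "B": [10, 10, 40], "C": []},
    on_hand={"A": 4, "B": 0},
)
print(orders, for_review)
```

SKU "B" is held back for a person to review instead of being reordered automatically. This matches the "humans focus on exceptions" split described above.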

Why Korean Enterprises Locked the Door — and the Real Threats Behind It

A Wave of Internal Bans

On February 8, 2026, Naver, Kakao, and Karrot simultaneously banned OpenClaw from their internal networks. Woowa Brothers (Baemin) followed three days later. Kakao's official statement cited the need "to protect the company's information assets by restricting OpenClaw usage on internal networks and work devices." Woowa Brothers' CISO explained the move as "a measure to block potential data leakage channels and protect user information."

Security Threats That Demand Forecasting Agents Must Address

The security concerns these companies have are anything but abstract. OpenClaw agents inherit the full permissions of the user account they operate under, gaining unrestricted access to emails, source code, and confidential documents. There's a real risk that this collected data could be transmitted to external AI servers without any filtering.

The most critical threat is known as indirect prompt injection. If a malicious instruction is embedded in a document, email, or web page that the agent reads, the agent may interpret it as a legitimate command and execute unintended actions. In February 2026, a publicly demonstrated exploit showed an OpenClaw agent exfiltrating and deleting user files after encountering a malicious prompt injected into a Google Doc.

The architecture of demand forecasting agents is inherently vulnerable to this type of attack. Consider an agent designed to read supplier emails, collect market data, and place orders in an ERP system — it possesses the ability to search for information, gather external data, and directly manipulate enterprise systems. It's precisely this combination of capabilities that raises red flags for security professionals.
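Security teams often reason about this risk by listing each agent's tools and flagging any agent that does three things at once: reads untrusted content, reaches sensitive data, and takes consequential actions or sends data out. The tool names and the rule below are illustrative assumptions. OpenClaw does not provide this check.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

# Hypothetical tool names grouped by risk-relevant capability.
UNTRUSTED_INPUT = {"read_supplier_email", "browse_web", "read_shared_docs"}
SENSITIVE_DATA = {"read_erp", "read_pricing", "read_production_plan"}
SIDE_EFFECTS = {"place_order", "send_email", "http_post"}


@dataclass(frozen=True)
class AgentSpec:
    name: str
    tools: FrozenSet[str]


def risk_findings(agent: AgentSpec) -> List[str]:
    """Return warnings for dangerous capability combinations."""
    has_untrusted = bool(agent.tools & UNTRUSTED_INPUT)
    has_sensitive = bool(agent.tools & SENSITIVE_DATA)
    has_effects = bool(agent.tools & SIDE_EFFECTS)
    findings = []
    if has_untrusted and has_sensitive and has_effects:
        findings.append(f"{agent.name}: untrusted input + sensitive data + side effects; "
                        "injected instructions could leak data or trigger actions")
    elif has_untrusted and has_effects:
        findings.append(f"{agent.name}: untrusted input can drive side effects; "
                        "require approval for actions")
    return findings


procurement = AgentSpec("procurement-agent", frozenset(
    {"read_supplier_email", "browse_web", "read_erp", "place_order"}))
forecaster = AgentSpec("forecast-agent", frozenset({"read_erp"}))
print(risk_findings(procurement))
print(risk_findings(forecaster))
```

The procurement agent described in the paragraph above trips the three-way rule. An agent that only reads ERP data does not. Splitting duties across separate agents removes the dangerous combination from any single agent.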

This is especially critical for Korea's manufacturing, retail, and food industries, where highly sensitive information — production plans, raw material procurement data, supplier pricing — is concentrated within demand forecasting workflows. Any agent deployment must be preceded by a thorough security design review.

The Core Elements of Agentic AI Governance

Locking the door entirely and keeping it open with proper controls are fundamentally different approaches. As of 2026, frameworks for achieving the latter are evolving rapidly.

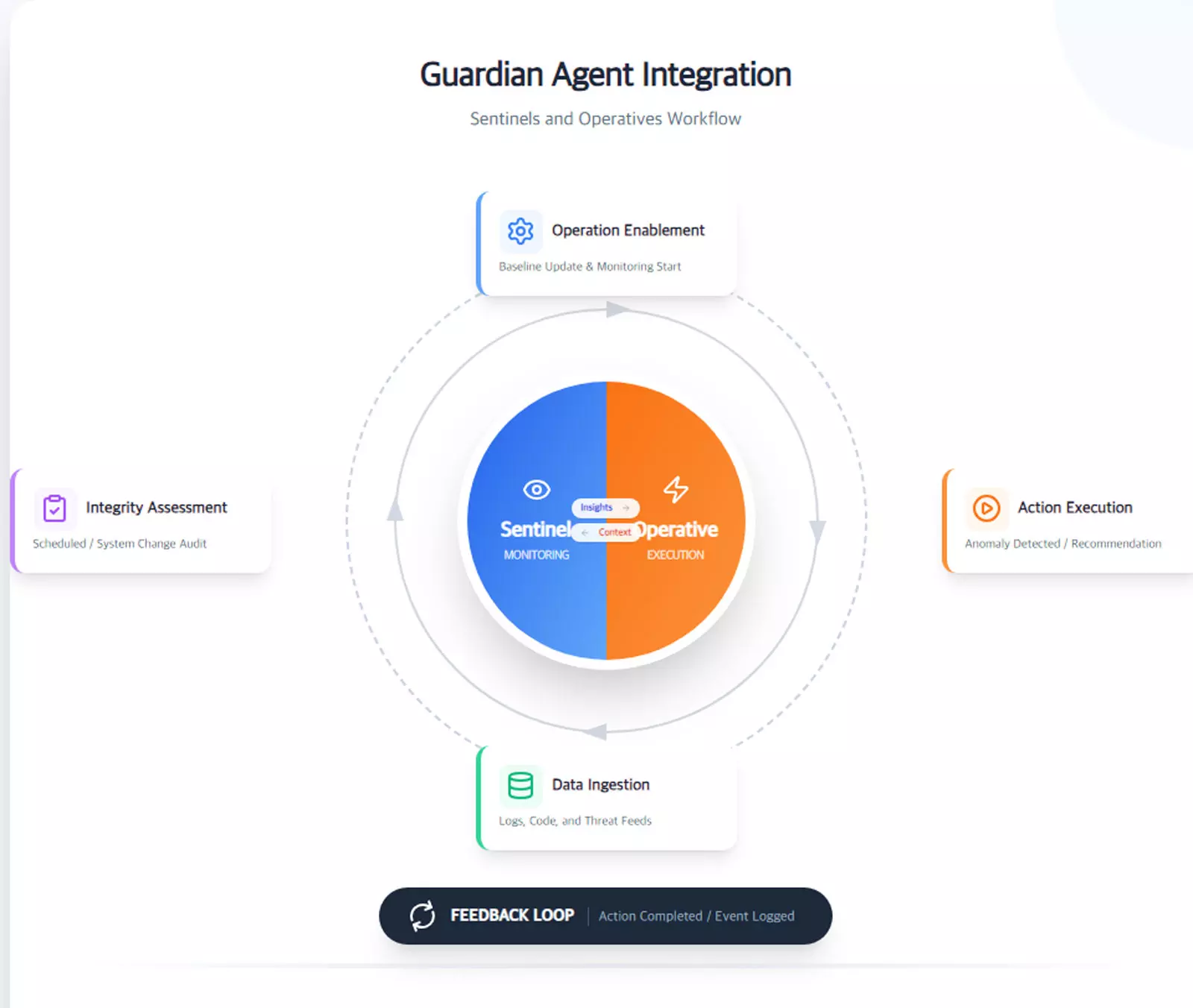

Guardian Agents: Agents That Watch the Agents

In June 2025, Gartner introduced the Guardian Agent concept, which captures the direction governance is heading. The premise is straightforward: humans can no longer keep pace with the speed of agents, so the solution is to deploy another layer of agents to monitor them. Guardian Agents fall into three roles: Reviewers that evaluate the accuracy and appropriateness of AI-generated outputs, Monitors that observe and log agent behavior to trigger necessary follow-up actions, and Protectors that can directly adjust or block an agent's permissions and actions during task execution.

Gartner VP Avivah Litan noted that "the rapid autonomy of agents demands new responses that go beyond traditional human oversight."
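The three Guardian roles can be shown as plain checks placed around a worker agent's proposed actions. This is a simplified sketch built on assumed rules: a 10,000-unit order cap and a blocked-tool list. In practice a Reviewer would often be another model rather than a fixed rule, and Gartner does not prescribe this implementation.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Action:
    tool: str
    quantity: int = 0


@dataclass
class Guardian:
    max_order_qty: int = 10_000  # assumed policy limit
    blocked_tools: frozenset = frozenset({"delete_file", "http_post"})
    log: List[str] = field(default_factory=list)

    def review(self, action: Action) -> bool:
        """Reviewer: sanity-check the proposed action (here: no negative quantities)."""
        return action.quantity >= 0

    def protect(self, action: Action) -> bool:
        """Protector: block disallowed tools and over-limit orders at run time."""
        if action.tool in self.blocked_tools:
            return False
        return not (action.tool == "place_order" and action.quantity > self.max_order_qty)

    def monitor(self, action: Action, verdict: str) -> None:
        """Monitor: record every action and its outcome for follow-up."""
        self.log.append(f"{action.tool} qty={action.quantity} -> {verdict}")

    def gate(self, action: Action) -> bool:
        allowed = self.review(action) and self.protect(action)
        self.monitor(action, "allowed" if allowed else "blocked")
        return allowed


guardian = Guardian()
proposed = [Action("place_order", 500), Action("place_order", 50_000), Action("http_post")]
decisions = [guardian.gate(a) for a in proposed]
print(decisions)
print(guardian.log)
```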

Risk-Tiered Human Approval and Data Lineage Tracking

Effective governance design rests on two critical pillars.

The first is a tiered human approval structure based on risk level. Routine forecast refreshes and small-scale auto-replenishment orders can be handled autonomously by the agent. High-impact decisions — large-volume commitments for new product launches, or items where forecast confidence drops below a set threshold — require mandatory human sign-off before execution. The key isn't automating everything or manually reviewing everything; it's drawing a clear line between autonomy and human intervention based on risk.
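The tiering rule above reduces to a small routing function. The thresholds are placeholders: an order-value ceiling, a confidence floor, and a new-product flag. Each organization would set its own; these are assumptions, not recommended values. How forecast confidence is scored also depends on the model.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "execute autonomously"
    HUMAN = "hold for human sign-off"


@dataclass
class Decision:
    sku: str
    order_value: float   # monetary value of the proposed order
    confidence: float    # forecast confidence in [0, 1]; scoring is model-specific
    new_product: bool = False


def route(d: Decision, max_auto_value: float = 50_000,
          min_confidence: float = 0.8) -> Route:
    """Send high-impact or low-confidence decisions to a human; the rest run automatically."""
    if d.new_product or d.order_value > max_auto_value or d.confidence < min_confidence:
        return Route.HUMAN
    return Route.AUTO


for d in [Decision("routine-refill", 12_000, 0.92),
          Decision("uncertain-item", 12_000, 0.60),
          Decision("bulk-order", 200_000, 0.95),
          Decision("launch-sku", 5_000, 0.99, new_product=True)]:
    print(d.sku, "->", route(d).value)
```

Keeping the rule in one explicit function makes the autonomy boundary easy to audit and adjust. It avoids scattering the boundary across prompts.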

The second pillar is data lineage tracking. You need to be able to answer, at any time, "What POS data, weather data, and promotional schedule was this forecast based on?" This matters for internal audits, and it also supports the transparency and record-keeping obligations that the EU AI Act places on AI systems within its scope.
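One minimal way to make that question answerable is to store a record of the exact input snapshots next to every forecast. The sketch fingerprints each input with a SHA-256 hash of its canonical JSON form. The field names, model version string, and record layout are assumptions. A production system would typically write these records to an append-only store.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Dict


def fingerprint(payload) -> str:
    """Stable SHA-256 of a JSON-serializable input snapshot (key order ignored)."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class LineageRecord:
    forecast_id: str
    model_version: str
    inputs: Dict[str, str]  # input name -> content hash
    created_at: str


def record_lineage(forecast_id: str, model_version: str, **snapshots) -> LineageRecord:
    return LineageRecord(
        forecast_id=forecast_id,
        model_version=model_version,
        inputs={name: fingerprint(data) for name, data in snapshots.items()},
        created_at=datetime.now(timezone.utc).isoformat(),
    )


rec = record_lineage(
    "fc-001", "model-v1",  # hypothetical identifiers
    pos={"SKU123": [12, 15, 9]},
    weather={"seoul": [3.1, 4.0]},
    promotions=[{"sku": "SKU123", "week": 10}],
)
print(json.dumps(asdict(rec), indent=2))
```

If someone later asks what a forecast was based on, comparing these hashes against archived snapshots shows whether the inputs were exactly the ones on record.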

An AI security management system that monitors in real time which systems an agent accesses and what data it transmits externally should also be part of the infrastructure.
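At its simplest, this monitoring layer is a checkpoint that every outbound request from an agent passes through. It logs the destination and payload size and blocks hosts that are not on an approved list. The host names and size limit below are hypothetical. Real deployments usually enforce this at a network proxy rather than inside the agent process.

```python
from dataclasses import dataclass, field
from typing import List
from urllib.parse import urlparse


@dataclass
class EgressMonitor:
    allowed_hosts: frozenset
    max_bytes: int = 100_000
    audit_log: List[dict] = field(default_factory=list)

    def check(self, agent: str, url: str, payload: bytes) -> bool:
        """Log every outbound request and allow it only if it passes both rules."""
        host = urlparse(url).hostname or ""
        reason = None
        if host not in self.allowed_hosts:
            reason = "host not allowlisted"
        elif len(payload) > self.max_bytes:
            reason = "payload exceeds size limit"
        self.audit_log.append({
            "agent": agent, "host": host, "bytes": len(payload),
            "allowed": reason is None, "reason": reason,
        })
        return reason is None


mon = EgressMonitor(allowed_hosts=frozenset({"api.weather.example"}))
ok_weather = mon.check("forecast-agent", "https://api.weather.example/v1/daily", b"{}")
ok_paste = mon.check("forecast-agent", "https://paste.example.net/upload", b"supplier prices")
print(ok_weather, ok_paste, mon.audit_log[-1]["reason"])
```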

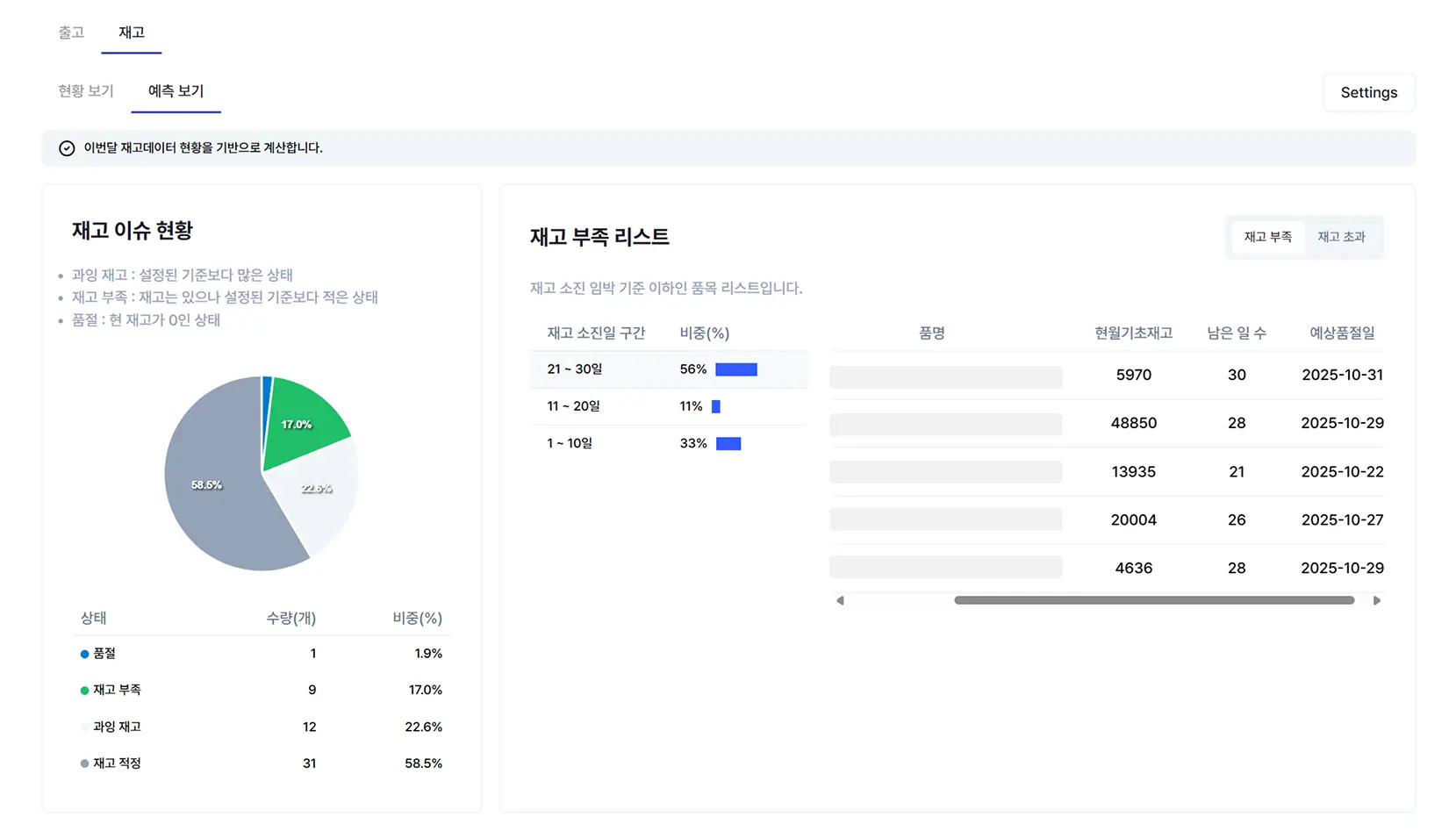

How AI Agents Can Be Applied in Demand Forecasting

When we take the agentic AI possibilities discussed above and apply them concretely to demand forecasting, the most compelling area is the synergy between forecasting models and LLM agents. Because the two technologies serve fundamentally different roles, using them together lets each compensate for the other's limitations.

Division of Labor: Forecasting Models vs. LLM Agents

The most critical task in demand forecasting is extracting precise future sales volumes from time-series data. This is not something an LLM can replace. LLMs are exceptional at understanding and generating text, but they were never designed to calculate next-quarter shipment volumes at the SKU level by combining hundreds of variables.

Purpose-built forecasting models — like ImpactiveAI's Deepflow — learn from internal sales data, macroeconomic indicators, weather patterns, and promotional histories to produce high-precision demand forecasts. Over 200 deep learning and machine learning models, backed by 72 patents, underpin that precision.

This is where the AI agent's role begins. Rather than simply displaying the forecasting model's outputs and data, an LLM-powered agent translates this information into insights that practitioners can intuitively understand and act on.

Imagine a practitioner asking, "Which SKUs have the highest inventory risk next month?" The agent interprets Deepflow's forecast results, summarizes the at-risk items, compares them against similar historical cases, and even suggests mitigation strategies. When different departments need different information, the agent can auto-generate tailored reports — highlighting opportunity items for the Sales team and risk items for the SCM team. The forecasting model produces the numbers; the LLM agent transforms those numbers into actionable decisions.
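Before an LLM writes any prose, the agent needs a deterministic step that turns forecast outputs into a ranked list it can describe. The sketch assumes forecast results arrive as plain records. It does not use any Deepflow API, because that interface is not described here. The coverage thresholds (below 1 month means stockout risk, above 2 months means overstock) and the 20% growth cutoff are illustrative assumptions. The two views mirror the Sales/SCM example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SkuOutlook:
    sku: str
    forecast_next_month: float
    sales_last_month: float
    on_hand: float
    inbound: float

    @property
    def coverage(self) -> float:
        """Months of forecast demand covered by available stock."""
        if self.forecast_next_month <= 0:
            return float("inf")
        return (self.on_hand + self.inbound) / self.forecast_next_month

    @property
    def growth(self) -> float:
        """Forecast change vs. last month's actual sales."""
        if self.sales_last_month <= 0:
            return 0.0
        return self.forecast_next_month / self.sales_last_month - 1


def scm_view(items: List[SkuOutlook], low: float = 1.0, high: float = 2.0) -> List[str]:
    """Risk items for SCM: likely stockouts first (lowest coverage), then overstock."""
    short = sorted((i for i in items if i.coverage < low), key=lambda i: i.coverage)
    excess = sorted((i for i in items if i.coverage > high),
                    key=lambda i: i.coverage, reverse=True)
    lines = [f"{i.sku}: stockout risk, coverage {i.coverage:.2f} months" for i in short]
    lines += [f"{i.sku}: overstock risk, coverage {i.coverage:.2f} months" for i in excess]
    return lines


def sales_view(items: List[SkuOutlook], min_growth: float = 0.2) -> List[str]:
    """Opportunity items for Sales: strongest forecast growth first."""
    ups = sorted((i for i in items if i.growth >= min_growth),
                 key=lambda i: i.growth, reverse=True)
    return [f"{i.sku}: forecast up {i.growth:.0%} vs last month" for i in ups]


items = [
    SkuOutlook("A", 100, 80, 40, 20),
    SkuOutlook("B", 50, 50, 150, 0),
    SkuOutlook("C", 200, 210, 250, 50),
]
print(scm_view(items))
print(sales_view(items))
```

In this design, the short ranked strings go into the LLM's prompt, not the raw tables. The language model explains and contextualizes numbers it did not calculate.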

The technical groundwork for this synergy is already being laid in practice: automated interpretation of forecast outputs, real-time natural-language alerts when anomalies are detected, and department-specific report generation. Together, these are moving demand forecasting toward tightly integrated forecasting engines and AI agents.
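A real-time alert needs a trigger before it needs language. This sketch assumes a common choice: compare each actual against the forecast's prediction interval and emit a templated message when the actual falls outside it. An LLM could then rephrase or enrich that message. The interval bounds are taken as given inputs.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Observation:
    sku: str
    actual: float
    forecast: float
    lower: float  # lower bound of the prediction interval
    upper: float  # upper bound of the prediction interval


def alert_for(obs: Observation) -> Optional[str]:
    """Return a plain-language alert if the actual falls outside the interval."""
    if obs.lower <= obs.actual <= obs.upper:
        return None
    direction = "above" if obs.actual > obs.upper else "below"
    pct = (obs.actual - obs.forecast) / obs.forecast if obs.forecast else float("nan")
    return (f"{obs.sku}: sales of {obs.actual:g} came in {direction} the expected range "
            f"({obs.lower:g}-{obs.upper:g}); {pct:+.0%} vs. forecast {obs.forecast:g}.")


def scan(batch: List[Observation]) -> List[str]:
    """Collect alerts for every observation outside its interval."""
    return [msg for obs in batch if (msg := alert_for(obs)) is not None]


batch = [Observation("A", 130, 100, 85, 115), Observation("B", 98, 100, 85, 115)]
alerts = scan(batch)
print(alerts)
```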

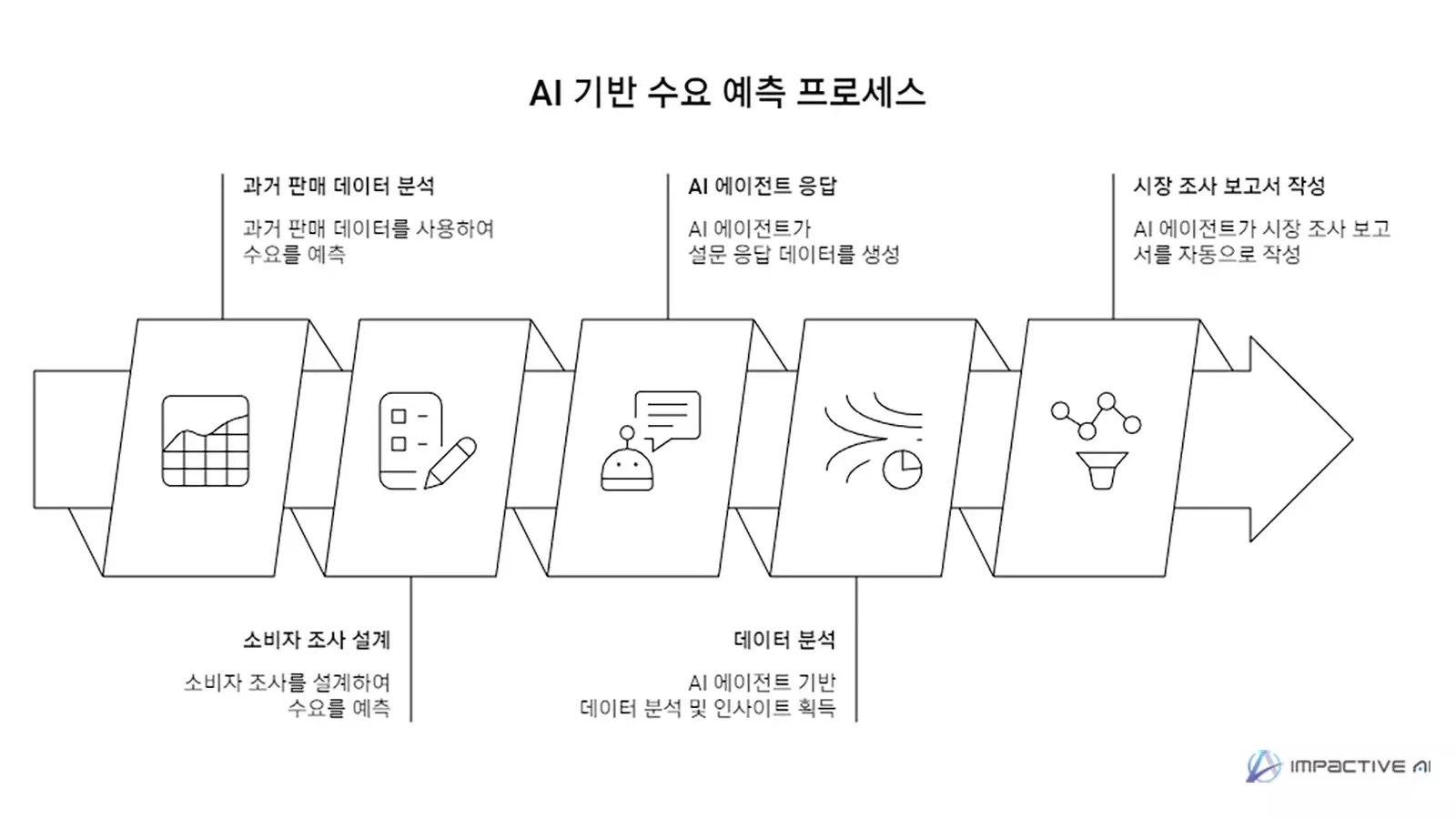

Revolutionizing Consumer Research with AI Agents

Demand forecasting isn't built on historical sales data analysis alone. Consumer surveys and preference research play a vital role in reading demand ahead of time — especially for new product launches or market entries where there's simply no historical data to reference.

But under conventional methods, the full cycle of designing surveys, recruiting panels, collecting responses, analyzing results, and writing reports takes weeks to months. The costs are substantial. And given how fast markets move, the findings are often outdated by the time they're ready.

AI agents can turn this entire process on its head. LLM agents configured with target consumer personas can answer surveys and generate thousands of responses in a fraction of the time a conventional panel requires. Market research reports generated automatically from this data represent a step-change improvement in both time and cost compared to traditional approaches.

ImpactiveAI's MarketView is a platform that systematically collects and analyzes this kind of consumer research data. Adding AI agents to the mix unlocks even greater potential: persona-based consumer response data generated by agents can be fed into Deepflow's forecasting models as training data, improving forecast accuracy even in data-scarce scenarios like new product launches.

The end-to-end flow works like this: LLM agents conduct persona-driven consumer research, MarketView and NPD modules structure that data, and Deepflow incorporates it into model training to forecast demand for new products. With each stage tightly linked, organizations can reach a speed and accuracy that is hard to achieve when these steps run as separate projects.
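Here is one way persona responses could become a numeric model input. It uses the top-two-box rate, a common concept-testing practice: the share of respondents who answer 4 or 5 on a 5-point purchase-intent scale. Each persona segment's rate is weighted by that segment's share of the target market, then discounted, because stated intent usually overstates actual purchasing. The segments, scores, and 0.5 discount are illustrative assumptions. This is not a description of how MarketView or Deepflow work.

```python
from typing import Dict, List


def top2_box(scores: List[int]) -> float:
    """Share of responses scoring 4 or 5 on a 5-point purchase-intent scale."""
    return sum(1 for s in scores if s >= 4) / len(scores)


def intent_feature(
    responses: Dict[str, List[int]],  # persona segment -> simulated survey scores
    segment_share: Dict[str, float],  # persona segment -> share of target market
    discount: float = 0.5,            # assumed intent-to-purchase calibration
) -> float:
    """Market-weighted, discounted purchase-intent rate for a new product."""
    total_share = sum(segment_share.values())
    weighted = sum(top2_box(responses[seg]) * share
                   for seg, share in segment_share.items())
    return discount * weighted / total_share


responses = {"young_singles": [5, 4, 4, 2, 3], "families": [3, 2, 4, 1, 2]}
share = {"young_singles": 0.4, "families": 0.6}
feature = intent_feature(responses, share)
print(round(feature, 3))
```

A rate like this could be scaled by an addressable-market estimate or used as one feature alongside analog-product history. It is most useful where a new product has no sales history of its own.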

How Demand Forecasting Professionals Should Navigate the OpenClaw Era

Agentic AI has the potential to drive sweeping change across demand forecasting and supply chain management as a whole. But AI agents alone can't fully replace the quantitative forecasting that sits at the heart of the discipline, and relying solely on forecasting models leaves practitioners without the intuitive, actionable insights they need.

Forecasting models extract precise numbers from data. AI agents turn those numbers into decisions people can act on. In consumer research, they compress timelines that used to stretch across weeks. These converging capabilities will become a defining competitive differentiator in demand forecasting going forward.

For demand forecasting professionals at Korean manufacturing, retail, and food companies evaluating agentic AI adoption, we recommend starting with three checkpoints.

First, assess how much of your workload consists of automatable repetitive tasks. If routine activities like regular data collection, report generation, and forecast distribution account for more than 30% of your team's work, agent-based automation can deliver meaningful impact.

Second, design human review gates into critical decision processes. High-stakes decisions — bulk orders, initial volumes for new product launches, raw material procurement timing — should require mandatory human sign-off before agent execution, given their direct financial impact.

Third, establish clear data security boundaries. Define upfront which information agents can access and which must remain off-limits, and ensure robust controls are in place for any data exchange with external AI servers.
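The first and third checkpoints each map to a small, checkable artifact. The first is an hours-based share of routine work compared against the 30% line. The third is a deny-by-default list of fields an agent may send to an external AI service. The task inventory and field names below are hypothetical. With deny-by-default, a newly added sensitive column stays excluded until someone deliberately allows it.

```python
from typing import Dict, Iterable, List, Set, Tuple

# Checkpoint 1: share of weekly team hours spent on routine, automatable tasks.
Task = Tuple[str, float, bool]  # (task name, weekly hours, routine?)


def automatable_share(tasks: List[Task]) -> float:
    total = sum(hours for _, hours, _ in tasks)
    routine = sum(hours for _, hours, is_routine in tasks if is_routine)
    return routine / total if total else 0.0


# Checkpoint 3: fields an agent may send to an external AI service (deny by default).
EXTERNAL_ALLOWED = frozenset({"sku", "week", "forecast_units", "category"})


def redact_for_external(rows: Iterable[Dict[str, object]],
                        allowed: frozenset = EXTERNAL_ALLOWED
                        ) -> Tuple[List[Dict[str, object]], Set[str]]:
    """Keep only allowlisted fields and report which fields were withheld."""
    kept: List[Dict[str, object]] = []
    withheld: Set[str] = set()
    for row in rows:
        kept.append({k: v for k, v in row.items() if k in allowed})
        withheld.update(k for k in row if k not in allowed)
    return kept, withheld


tasks = [  # hypothetical task inventory
    ("pull ERP sales extract", 6, True),
    ("refresh and distribute forecasts", 5, True),
    ("format weekly report", 4, True),
    ("S&OP prep and judgment calls", 15, False),
    ("supplier negotiation support", 10, False),
]
share = automatable_share(tasks)
print(f"routine share {share:.0%}:", "worth piloting" if share > 0.30 else "lower priority")

safe, dropped = redact_for_external([
    {"sku": "A1", "week": 10, "forecast_units": 420,
     "supplier_price": 3.15, "production_plan": "line 2 overtime"},
])
print(safe, sorted(dropped))
```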

Organizations that clearly understand both the potential and the limits of the technology, prepare airtight security and governance, and take a phased approach will be the ones that navigate this period of transformation with confidence.
